
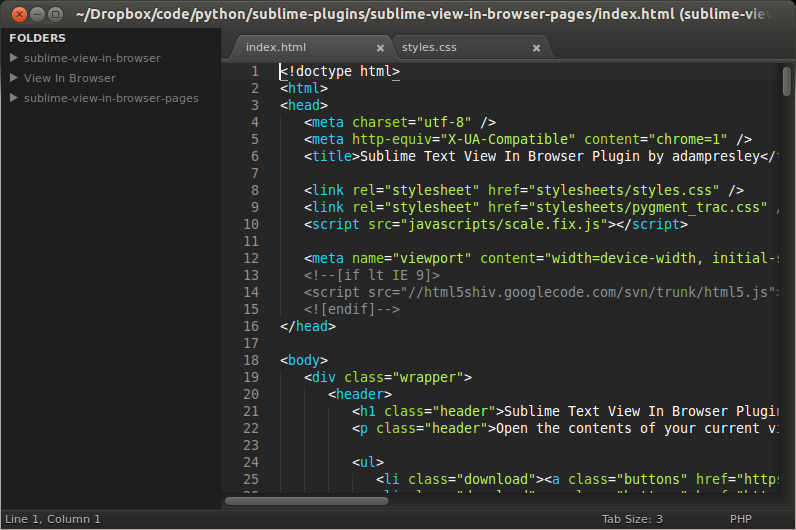

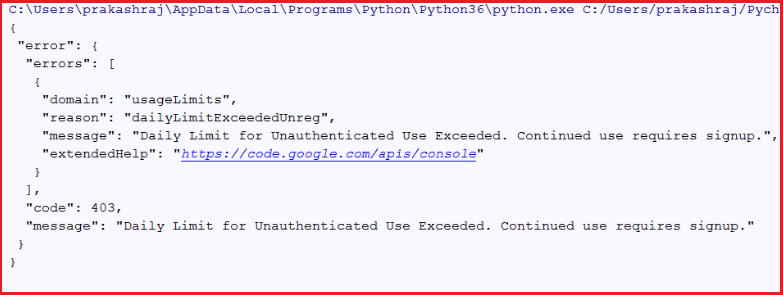

In many contexts, such as automation, data science, data engineering, and application development, Python is the lingua franca. It’s commonly used for downloading images and web pages, with a variety of methods and packages to choose from. One method that’s simple but robust is to interface with Wget. Wget is a free twenty-five-year-old command-line program that can retrieve files from web services using HTTP, HTTPS, and FTP. If you use it with Python, you’re virtually unlimited in what you can download and scrape from the web.

This article will show you the benefits of using Wget with Python with some simple examples. You’ll also learn about Wget’s limits and alternatives.

Wget is a convenient and widely supported tool for downloading files over three protocols: HTTP, HTTPS, and FTP. Wget owes its popularity to two of its main features: recursiveness and robustness.

Recursiveness: Using the proper parameters, Wget can operate as a web crawler. Instead of downloading a single file, it can recursively download files linked from a specific web page until all the links have been exhausted or until it reaches a user-specified recursion depth.
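As a rough illustration of the recursive mode described above, here is a minimal sketch of driving Wget from Python with the standard `subprocess` module. The function names, the default depth of 2, the `downloads` directory, and the example URL are assumptions made for this illustration. The flags themselves (`--recursive`, `--level`, `--no-parent`, `--directory-prefix`) are standard Wget options.

```python
import shutil
import subprocess


def build_wget_command(url, depth=2, output_dir="downloads"):
    """Return the argument list for a depth-limited recursive Wget download.

    The function name and its defaults are illustrative, not part of Wget.
    """
    return [
        "wget",
        "--recursive",                        # follow links found in downloaded pages
        f"--level={depth}",                   # stop at this recursion depth
        "--no-parent",                        # never ascend above the starting directory
        f"--directory-prefix={output_dir}",   # save everything under output_dir
        url,
    ]


def recursive_download(url, depth=2, output_dir="downloads"):
    """Run Wget and return its exit code (0 means success)."""
    if shutil.which("wget") is None:
        raise FileNotFoundError("wget was not found on PATH")
    # Passing a list (not a shell string) avoids shell-quoting problems in the URL.
    completed = subprocess.run(
        build_wget_command(url, depth, output_dir), check=False
    )
    return completed.returncode
```

Calling `recursive_download("https://example.com/docs/", depth=1)` would fetch the starting page and the files it links to directly, assuming Wget is installed and the placeholder URL is replaced with a real one. Keeping the command construction in its own function makes it easy to inspect or log the exact command before anything is downloaded.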
